2022 Expert Survey on Progress in AI. 03 AUGUST 2022

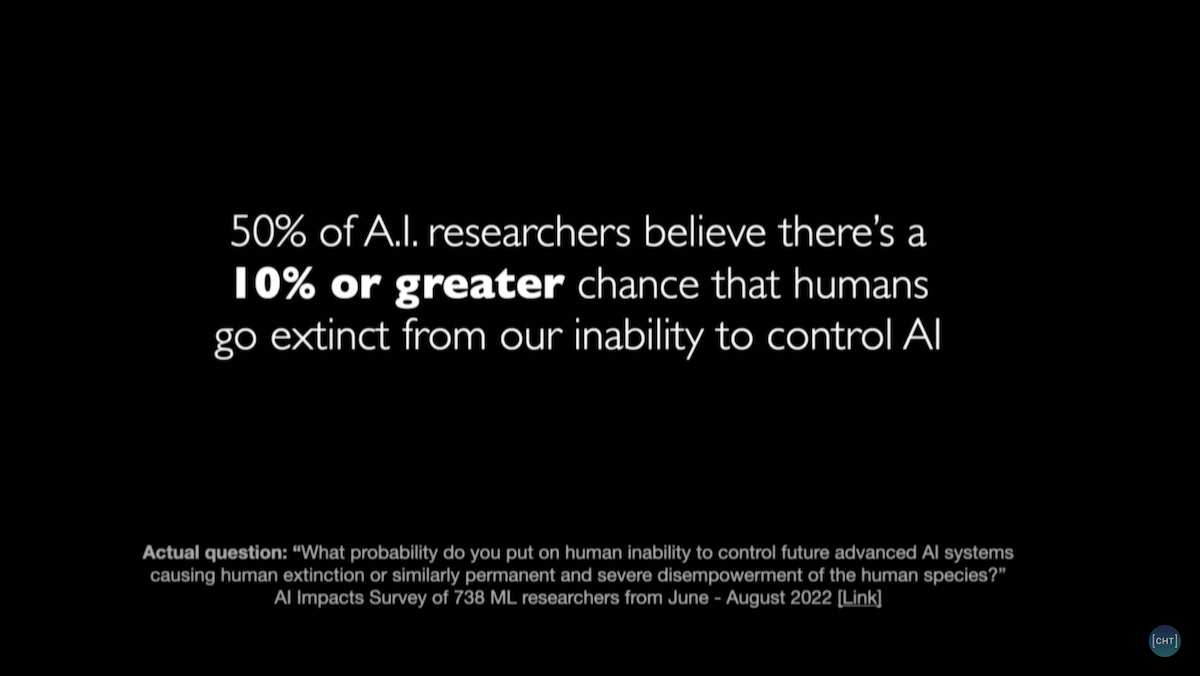

48% of respondents gave at least 10% chance of an extremely bad outcome

We contacted approximately 4271 researchers who published at the conferences NeurIPS or ICML in 2021. These people were selected by taking all of the authors at those conferences and randomly allocating them between this survey and a survey being run by others. We then contacted those whose email addresses we could find. We found email addresses in papers published at those conferences, in other public data, and in records from our previous survey and Zhang et al 2022. We received 738 responses, some partial, for a 17% response rate.
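As a quick check on the figures above, the response rate can be recomputed from the two counts given in the text. This is only a sketch; the variable names are illustrative, and the number contacted is approximate as stated.

```python
# Counts taken from the paragraph above; variable names are illustrative.
contacted = 4271   # approximate number of researchers contacted
responses = 738    # responses received, some of them partial

response_rate = responses / contacted
print(f"Response rate: {response_rate:.1%}")  # prints "Response rate: 17.3%"
```

Rounded to the nearest whole percent, this matches the 17% quoted above.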

Summary of results

- The aggregate forecast time to a 50% chance of high-level machine intelligence (HLMI) was 37 years, i.e. 2059 (not including data from questions about the conceptually similar Full Automation of Labor, which in 2016 received much later estimates). This timeline has become about eight years shorter in the six years since 2016, when the aggregate prediction put 50% probability at 2061, i.e. 45 years out. Note that these estimates are conditional on “human scientific activity continu[ing] without major negative disruption.”

- The median respondent believes the probability that the long-run effect of advanced AI on humanity will be “extremely bad (e.g., human extinction)” is 5%. This is the same as it was in 2016 (though Zhang et al 2022 found 2% in a similar but non-identical question). Many respondents were substantially more concerned: 48% of respondents gave at least a 10% chance of an extremely bad outcome. But some were much less concerned: 25% put it at 0%.

- The median respondent believes society should prioritize AI safety research “more” than it is currently prioritized. Respondents chose from “much less,” “less,” “about the same,” “more,” and “much more.” 69% of respondents chose “more” or “much more,” up from 49% in 2016.

- The median respondent thinks there is an “about even chance” that a stated argument for an intelligence explosion is broadly correct. 54% of respondents say the likelihood that it is correct is “about even,” “likely,” or “very likely” (corresponding to probability >40%), similar to 51% of respondents in 2016. The median respondent also believes machine intelligence will probably (60%) be “vastly better than humans at all professions” within 30 years of HLMI, and the rate of global technological improvement will probably (80%) dramatically increase (e.g., by a factor of ten) as a result of machine intelligence within 30 years of HLMI.
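
The timeline arithmetic in the first bullet can be checked with a short sketch. It uses only the years and horizons quoted there; the variable names are illustrative. It also makes explicit that "about eight years shorter" refers to the time remaining at the moment of each survey, while the forecast calendar year itself moved by only two years.

```python
# Figures from the first summary bullet; names are illustrative.
survey_2016, horizon_2016 = 2016, 45   # 50% HLMI forecast was 45 years out
survey_2022, horizon_2022 = 2022, 37   # 50% HLMI forecast is now 37 years out

forecast_year_2016 = survey_2016 + horizon_2016   # 2061
forecast_year_2022 = survey_2022 + horizon_2022   # 2059

horizon_change = horizon_2016 - horizon_2022             # 8 years less remaining
calendar_shift = forecast_year_2016 - forecast_year_2022  # forecast year 2 years earlier

print(forecast_year_2016, forecast_year_2022, horizon_change, calendar_shift)
# prints: 2061 2059 8 2
```

Six of the eight years are simply the time that passed between the two surveys; the remaining two are the shift in the forecast date.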

Extinction from human failure to control AI

Participants were asked:

What probability do you put on human inability to control future advanced AI systems causing human extinction or similarly permanent and severe disempowerment of the human species?

Answers

Median 10%.